How to Build an AI Account Qualifier That Thinks Like Your Best Rep

How to build a target account list that goes beyond firmographic filters — capturing your best reps' ICP judgment so you can train, scale, and qualify accounts with AI

Most target account lists are built the same way: export from ZoomInfo, filter by firmographics, and assign the accounts to your reps. The list looks defensible, but it can miss the signals that matter most for ICP fit.

The problem isn’t the data provider. It’s that the criteria that actually determine fit, the ones your best reps have been quietly applying for years, never make it into the list at all. They live in someone’s head. Filters can’t capture them. And until you write them down, you can’t train to them, scale them, or ask AI to help with them.

Here’s a process for building an account list that captures real judgment, not just spreadsheet logic.

Step 1: Acknowledge what your filters can’t do

Start by pulling your initial list from ZoomInfo, Sales Nav, or whatever data provider you use. Filter by the firmographics you know matter — size, industry, geography. This gives you a working universe.

Then sit with it honestly. There are companies on that list that don’t belong, and companies missing that should be there. That’s not a failure of the tool — it’s the ceiling of filters. Industry classifications don’t map neatly to your ICP. Revenue ranges don’t tell you anything about buying behavior. The raw list is a starting point, not a judgment.
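To make that ceiling concrete, here is a minimal Python sketch of a firmographic filter. The `Account` fields, the thresholds, and both sample companies are hypothetical and invented for illustration; they are not taken from any data provider's schema.

```python
from dataclasses import dataclass


@dataclass
class Account:
    name: str
    industry: str
    employees: int
    country: str


def passes_filters(account: Account) -> bool:
    """Firmographic check only: industry, size, and geography (illustrative thresholds)."""
    return (
        account.industry == "Medical Devices"
        and 50 <= account.employees <= 1000
        and account.country == "US"
    )


# Hypothetical accounts, invented for illustration.
accounts = [
    Account("Acme Surgical", "Medical Devices", 200, "US"),
    Account("Beta Health Analytics", "Software", 120, "US"),
]

working_universe = [a.name for a in accounts if passes_filters(a)]
print(working_universe)
```

The filter keeps the first company and drops the second purely on its industry label. Nothing in it can say whether either one would actually buy, which is the gap the rest of this process is meant to close.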

The downloadable skill linked later in this guide walks you through the entire process end to end. If you want to test each section first, we have included prompts to try along the way.

Prompt to try in Claude: “Here are my current ICP criteria: [paste your firmographic filters]. What are the types of companies that might pass these filters but still be a bad fit? What types of companies might not pass these filters but still be worth targeting? Explain your reasoning for each.”

This is a useful gut-check. It starts surfacing the criteria you’re already applying informally — the ones that never made it into your filter settings.

Step 2: Shadow your best rep(s) — and document what you see

This is the most important step, and the most skipped.

The team at MedScout figured this out early. Brian Aoyama and Mallory Blocker brought their entire team into a room, grabbed a few accounts from the CRM, hooked one of their best AEs up to the projector, hit record on Fathom, and asked him to walk through account evaluation out loud in real time. The team watched what tabs he opened, noticed where he lingered, and paid attention to what made him say “this is interesting” versus “this one’s not for us.”

They wrote up what they observed. Then they took it back to him and asked: what did we miss? The first pass always misses things that were instinctive. That conversation surfaced criteria he’d been applying for years without consciously thinking about them.

Once you have a recording of a session like this, feed the transcript into Claude:

Prompt to try: “Here’s a transcript of a rep walking through account evaluation. Pull out the criteria they applied, the signals they looked for, and anything they weighted heavily without naming explicitly. Flag any areas of uncertainty or unresolved questions. Structure it so we can bring it back to the rep to confirm and fill in what’s missing.”

The output of this step is a first documented draft of how your best person actually evaluates accounts — not invented top-down, but captured from real behavior. That’s worth building for two reasons: training new reps, and eventually serving as the foundation for AI to help qualify accounts at scale.

Step 3: Run your best and worst customers through the same lens

Pull at least three of your best-fit customers and three of your worst-fit or churned customers. For each one, ask: what made this a good or bad fit? What did we know — or could have known — before the deal closed?

You’re looking for the underlying traits beneath the surface patterns. Not just “they were a small company” but why that mattered. Was it budget constraints? Decision-making process? Risk tolerance? “Small company” is a data point. “Low risk appetite in a category they see as non-core” is a trait.

Prompt to try in Claude: “Here are three of our best-fit customers: [names + 2-3 sentences on each - what made them a good or bad fit, how the deal played out, anything you wish you’d known or asked about sooner]. Here are three that churned or were bad fits: [same]. Based on what I’ve shared, what traits seem to distinguish the two groups? What questions would you ask me to sharpen the distinction?”

Run this in rounds — one good, one bad, then another pair, then another. Each round tunes the lens. You end up with a set of real patterns grounded in your actual customer history, not your assumptions about who should be a good fit.

Step 4: Turn patterns into traits, and traits into signals

Now you have a list of good and bad patterns. The next step is to move up a level of abstraction: what are the underlying traits these patterns point to?

A trait is something like “openness to innovation” or “organizational stability” — not a single data point, but an attribute that matters for whether an account will succeed with your product. One trait might have multiple signals. Your job here is to get specific: for each trait, what would actually tell you it’s present?

Prompt to try in Claude: “Here are the patterns we identified across our best and worst customers: [list]. Help me group these into 4-6 underlying traits. For each trait, suggest 2-3 observable signals that would indicate it’s present or absent.”

This is where vague instincts become something you can actually work with — and eventually, something you can hand off.
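If it helps to see the shape of the result, the trait-to-signal grouping can be written down as a small data structure. This is only a sketch: the two trait names come from the examples above, while the class names and every signal listed are hypothetical placeholders you would replace with your own.

```python
from dataclasses import dataclass, field


@dataclass
class Signal:
    description: str


@dataclass
class Trait:
    name: str
    signals: list[Signal] = field(default_factory=list)


# Trait names are the examples from the text; the signals are hypothetical.
traits = [
    Trait("Openness to innovation", [
        Signal("Adopted a new tool category in the last 12 months"),
        Signal("Has a role dedicated to process improvement"),
    ]),
    Trait("Organizational stability", [
        Signal("No recent leadership turnover on the buying team"),
        Signal("No layoffs announced in the past year"),
    ]),
]

for trait in traits:
    print(f"{trait.name}: {len(trait.signals)} signals")
```

Keeping signals nested under their trait preserves the reason each signal exists, which matters later when someone asks why a given data point is on the rubric.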

Step 5: Map each signal to when and how you can find it

For each signal, you need to answer two questions. First, when can you find it: through outside-in research before a conversation, or only during the sales process itself? Second, where would you look for the evidence?

This matters because it shapes your list-building logic and your qualification workflow. Some signals are research-accessible — job postings, LinkedIn, news, a company’s website. Others only show up in discovery. Both are useful, but you need to know which is which before you can build a repeatable process around them.

Prompt to try in Claude: “Here are our qualification signals: [list]. For each one, help me identify: (1) whether this is findable through outside-in research or only through direct conversation, (2) where specifically you’d look to find it, and (3) what ‘good’ vs. ‘concerning’ looks like for each signal.”

The output is your qualification rubric: a structured document a new rep could follow, or that Claude could use to research and pre-qualify accounts at scale.
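One way to picture that mapping is a record per signal that carries its source, where to look, and what good versus concerning looks like. The sketch below is an assumption about how you might lay it out, not a prescribed format; the `Source` enum, the `MappedSignal` fields, and both example signals are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Source(Enum):
    RESEARCH = "outside-in research"
    DISCOVERY = "direct conversation"


@dataclass
class MappedSignal:
    description: str
    source: Source
    where_to_look: str
    good: str
    concerning: str


# Hypothetical signals, invented for illustration.
signals = [
    MappedSignal(
        description="Hiring for roles that would use the product",
        source=Source.RESEARCH,
        where_to_look="Job postings",
        good="Several relevant openings",
        concerning="Hiring freeze",
    ),
    MappedSignal(
        description="Clear owner for the buying decision",
        source=Source.DISCOVERY,
        where_to_look="First discovery call",
        good="A named decision-maker",
        concerning="Decision by committee with no owner",
    ),
]


def split_by_source(items):
    """Group signal descriptions by where the evidence can be found."""
    grouped = {source: [] for source in Source}
    for item in items:
        grouped[item.source].append(item.description)
    return grouped


grouped = split_by_source(signals)
print("Pre-qualify from research:", grouped[Source.RESEARCH])
print("Ask in discovery:", grouped[Source.DISCOVERY])
```

The research group is the part you could hand to Claude for pre-qualification; the discovery group becomes a question list for reps.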

Ready to give it a go?

Download the skill here. It guides you through the process to produce a rubric for account qualification tailored to your business.

What good looks like

A finished rubric has 4-6 traits, each with 2-3 signals. For each signal, you know: what it is, why it matters to your business, what good looks like versus what’s concerning, and where to find it. It’s specific enough that two different people reviewing the same account would reach the same conclusion.
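The structural part of that description can be checked mechanically. The sketch below assumes a rubric stored as a plain dictionary of trait name to a list of signal dictionaries; that layout, the field names, and the draft content are all hypothetical. It only checks shape, so the "two reviewers reach the same conclusion" test still needs real people.

```python
REQUIRED_FIELDS = ("what", "why", "good", "concerning", "where")


def rubric_problems(rubric: dict) -> list[str]:
    """Return a list of ways the rubric misses the shape described above."""
    problems = []
    if not 4 <= len(rubric) <= 6:
        problems.append(f"expected 4-6 traits, found {len(rubric)}")
    for trait, signals in rubric.items():
        if not 2 <= len(signals) <= 3:
            problems.append(f"{trait}: expected 2-3 signals, found {len(signals)}")
        for i, signal in enumerate(signals, start=1):
            missing = [name for name in REQUIRED_FIELDS if not signal.get(name)]
            if missing:
                problems.append(f"{trait}, signal {i}: missing {', '.join(missing)}")
    return problems


# Hypothetical early draft: one trait, one signal, "where" left blank on purpose.
draft = {
    "Openness to innovation": [
        {
            "what": "Adopted a new tool category recently",
            "why": "Suggests willingness to change how the team works",
            "good": "A rollout in the last 12 months",
            "concerning": "Same stack for many years",
            "where": "",
        },
    ],
}

problems = rubric_problems(draft)
for problem in problems:
    print(problem)
```

An empty list means the rubric has the expected structure, not that it is right.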

The practical test Brian and Mallory used at MedScout: could a new rep use this rubric to evaluate an account the way your best rep would? If yes, you’re done. If not, keep iterating.

Watch-outs

The first pass will feel incomplete. That’s normal — the goal of the shadowing step and the best/worst customer analysis is iteration, not perfection on round one. Don’t try to build the definitive rubric in a single session.

Don’t let the rubric drift into a yes/no checklist either. The value is in documented judgment with reasoning, not a binary score. You want to be able to defend why a signal matters and what you’re looking for — not just whether a box got checked.
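As a rough illustration of the difference, an assessment record can carry the evidence and the reasoning alongside the rating instead of a bare true/false. The `SignalAssessment` class, its fields, and the sample entries are hypothetical; treat this as one possible shape rather than a required one.

```python
from dataclasses import dataclass


@dataclass
class SignalAssessment:
    signal: str
    rating: str     # "good", "concerning", or "unknown"
    evidence: str   # what was actually observed
    reasoning: str  # why it matters for this account


def summarize(assessments: list[SignalAssessment]) -> str:
    """Render each judgment with its evidence and reasoning, one per line."""
    return "\n".join(
        f"{a.signal}: {a.rating} (evidence: {a.evidence}; why: {a.reasoning})"
        for a in assessments
    )


# Hypothetical assessments, invented for illustration.
assessments = [
    SignalAssessment(
        signal="Hiring for roles that would use the product",
        rating="good",
        evidence="Three relevant job postings this quarter",
        reasoning="Growing the team that would own the tool",
    ),
    SignalAssessment(
        signal="Clear owner for the buying decision",
        rating="unknown",
        evidence="Not findable from research",
        reasoning="Needs a discovery question",
    ),
]

summary = summarize(assessments)
print(summary)
```

Allowing "unknown" as a rating keeps a missing signal from being silently scored as a no.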

The takeaway

A target account list built on filters alone is a starting point. What turns it into something your team actually trusts is the reasoning underneath it — both your ICP guidelines and the criteria your best reps apply instinctively, documented clearly enough that anyone can follow it.

That documentation is worth building for its own sake: it trains new reps, aligns the team, and creates a shared standard for what good looks like. And once it exists, it becomes the foundation for Claude to help — not replacing your judgment, but doing the research legwork against criteria you’ve already defined.

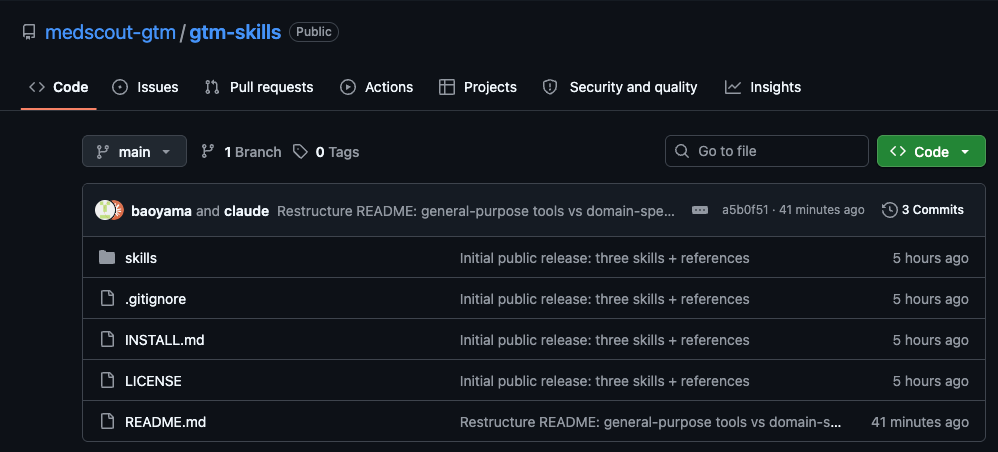

Special thanks to Brian Aoyama, VP Growth & Applied AI, and Mallory Blocker, Growth Marketing Lead, at MedScout for sharing their process and the hard-won lessons behind it.